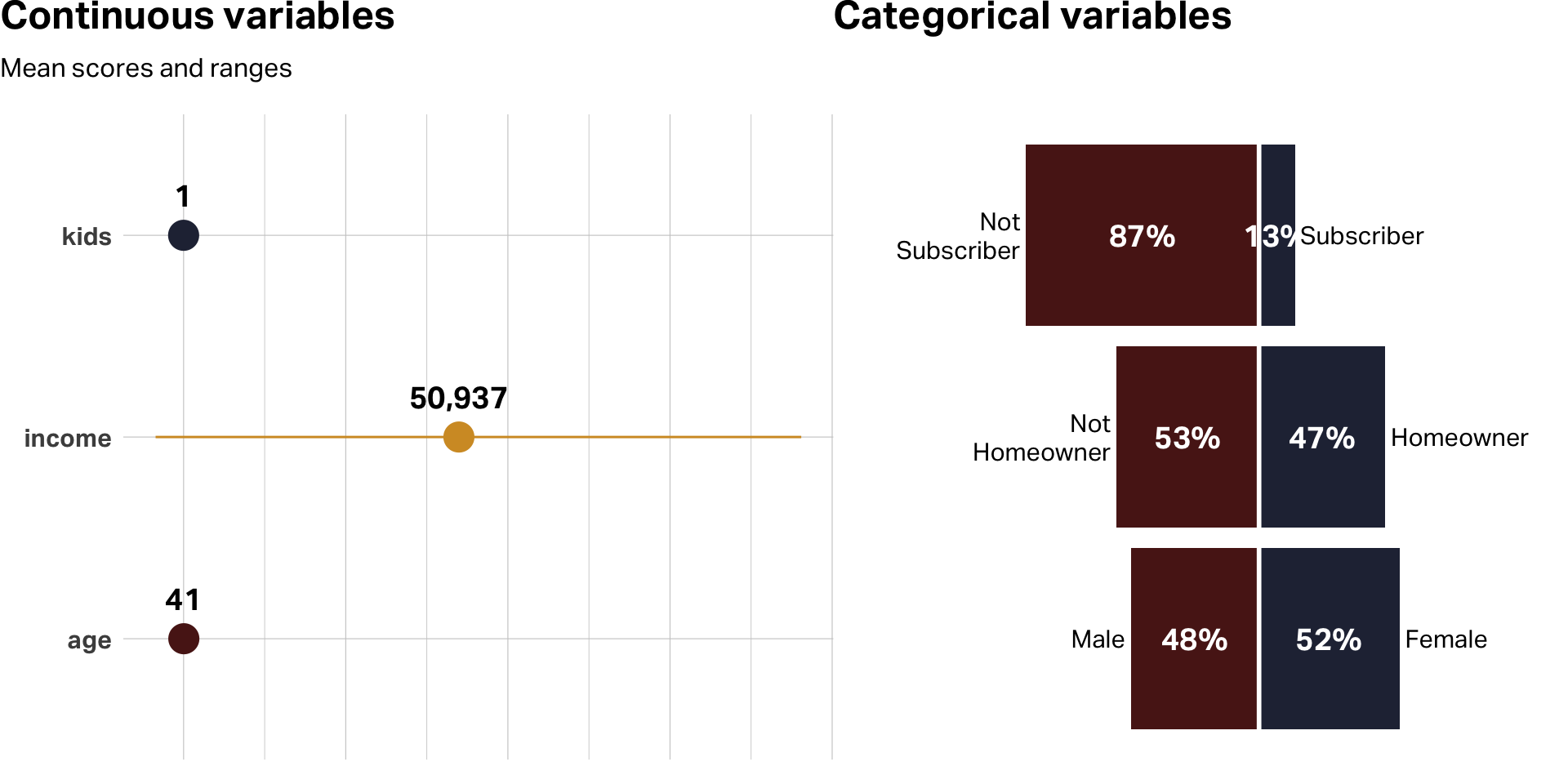

| Variable | Median | Mean | SD | Min | Max | NAs |
|---|---|---|---|---|---|---|
| age | 39 | 41.2 | 12.7 | 19 | 80 | 0 |
| female | 1 | 0.5 | 0.5 | 0 | 1 | 0 |
| income | 52,014 | 50,936.5 | 20,137.5 | −5,183 | 114,278 | 0 |
| kids | 1 | 1.3 | 1.4 | 0 | 7 | 0 |
| own_home | 0 | 0.5 | 0.5 | 0 | 1 | 0 |
| subscribe | 0 | 0.1 | 0.3 | 0 | 1 | 0 |

Clustering Customer Data

Overview

Using unsupervised learning techniques to divide customers into meaningful groups.

Presented by:

Larry Vincent,

Professor of the Practice

Marketing

Presented to:

MKT 512

February 19, 2026

Why clustering?

- Identify natural groupings among survey respondents based on shared patterns in their responses.

- Reveal structure in your data that isn’t visible from summary statistics alone—who thinks alike, and how?

- Help you move from “what does the average respondent think?” to “what do different types of respondents think?”

- Strengthen your analysis by showing whether key attitudes, behaviors, or preferences vary meaningfully across groups.

- Provide a foundation for richer storytelling—clusters give you characters, not just numbers.

Key ideas from the reading

- Clustering is unsupervised. There is no dependent variable. You’re asking the algorithm to find natural groupings, not predict an outcome.

- Choose what to cluster on carefully. Don’t dump everything in by default. Select the variables that represent the differences you care about.

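- Best practice is to standardize variables, particularly when they are measured on very different scales (for example, income in dollars versus number of kids). This is especially true for k-means, which relies on Euclidean distance: variables with larger ranges dominate the distance calculation, and the mean-based centroids are also sensitive to outliers.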

- Clustering algorithms will always produce clusters, even if the data doesn’t contain real or logical groups.
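To see the last point in action, here is a minimal sketch that runs base R's `kmeans()` on purely random, structureless data. The data (`noise`) is hypothetical and simulated for illustration; it contains no real groups, yet k-means still returns exactly the number of clusters requested.

```r
# Hypothetical data: 300 points drawn uniformly at random, so no real groups exist
set.seed(512)
noise <- data.frame(x = runif(300), y = runif(300))

# k-means still partitions the points into the 3 clusters we asked for
fit <- kmeans(noise, centers = 3, nstart = 10)
table(fit$cluster)  # three non-empty groups, even though the data has no structure
```

The algorithm has no built-in test of whether the groups are "real," which is why the profiling and overlap checks later in the deck matter.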

Case example

Le Abode

- Le Abode is a fictional blog and e-commerce brand known for vintage-inspired, limited-edition home furnishings that sell out.

- Endorsed by influential figures; strong growth from consumers investing more in home environments.

- The team is evaluating a unique customer priority program and wants to understand who the best target audience is.

- Dataset includes demographics such as age, income, home ownership, number of children (kids), gender, and subscription to Le Abode’s priority reservation program.

Data inspection
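A summary like the variable table at the top of this deck can be produced with a few lines of base R. This is a sketch, not the code used for the slides: `describe()` and `df_demo` are hypothetical names, and `df_demo` is simulated stand-in data because the Le Abode dataset is not reproduced here. A table like this is also a quick way to spot oddities before clustering, such as the negative minimum income shown above.

```r
# Hypothetical stand-in for the Le Abode data (simulated, not the real df)
set.seed(512)
df_demo <- data.frame(
  age = round(rnorm(300, 41, 12)),
  income = rnorm(300, 51000, 20000),
  kids = rpois(300, 1.3),
  own_home = rbinom(300, 1, 0.5)
)

# One row of summary statistics per numeric column
describe <- function(data) {
  num <- data[sapply(data, is.numeric)]  # keep numeric columns only
  data.frame(
    Variable = names(num),
    Median = sapply(num, median, na.rm = TRUE),
    Mean = sapply(num, mean, na.rm = TRUE),
    SD = sapply(num, sd, na.rm = TRUE),
    Min = sapply(num, min, na.rm = TRUE),
    Max = sapply(num, max, na.rm = TRUE),
    NAs = sapply(num, function(v) sum(is.na(v))),
    row.names = NULL
  )
}

describe(df_demo)
```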

Analysis questions

- Any consistent patterns? If so, what are they?

- How do the segments differ in each model?

- How actionable are each model's segmentation suggestions? Could you target customers based on them? Would they be useful in developing or improving the marketing mix?

Considerations

- Which data to use for clustering?

- Which clustering method?

- How many clusters?

- How to preprocess the data?

- How to interpret the results?

K-Means

K-means clustering

- Partitions data into k distinct clusters by assigning each data point to the cluster with the nearest centroid (mean).

- Best suited to large datasets with numeric variables, when the number of clusters (k) can be specified in advance.

- Differentiated from other methods by its computational efficiency and simplicity, although it’s sensitive to outliers and initial centroid placement.

- Variables should be standardized, especially when they are on different scales. Running the algorithm multiple times with different initializations is recommended to ensure stable, consistent results.

- Set a random seed for reproducibility, because the initial centroids are chosen at random.
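The three practical points above (standardize, use multiple starts, set a seed) map directly onto base R's `kmeans()`. A minimal sketch, assuming hypothetical simulated data `X` with two numeric variables on very different scales:

```r
set.seed(512)  # makes the random initial centroids reproducible
X <- data.frame(  # hypothetical data: two groups, variables on different scales
  age = c(rnorm(100, 28, 4), rnorm(100, 55, 6)),
  income = c(rnorm(100, 28000, 5000), rnorm(100, 60000, 8000))
)
X_std <- scale(X)  # standardize: every column gets mean 0 and SD 1

# nstart = 25 runs 25 random initializations and keeps the best solution
km <- kmeans(X_std, centers = 2, nstart = 25)
km$size

# Profile the clusters in the original units, which are easier to interpret
aggregate(X, by = list(cluster = km$cluster), FUN = mean)
```

Clustering happens on the standardized values, but the profiles are reported on the raw scale so that "age" still means years and "income" still means dollars.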

Different data types and scales

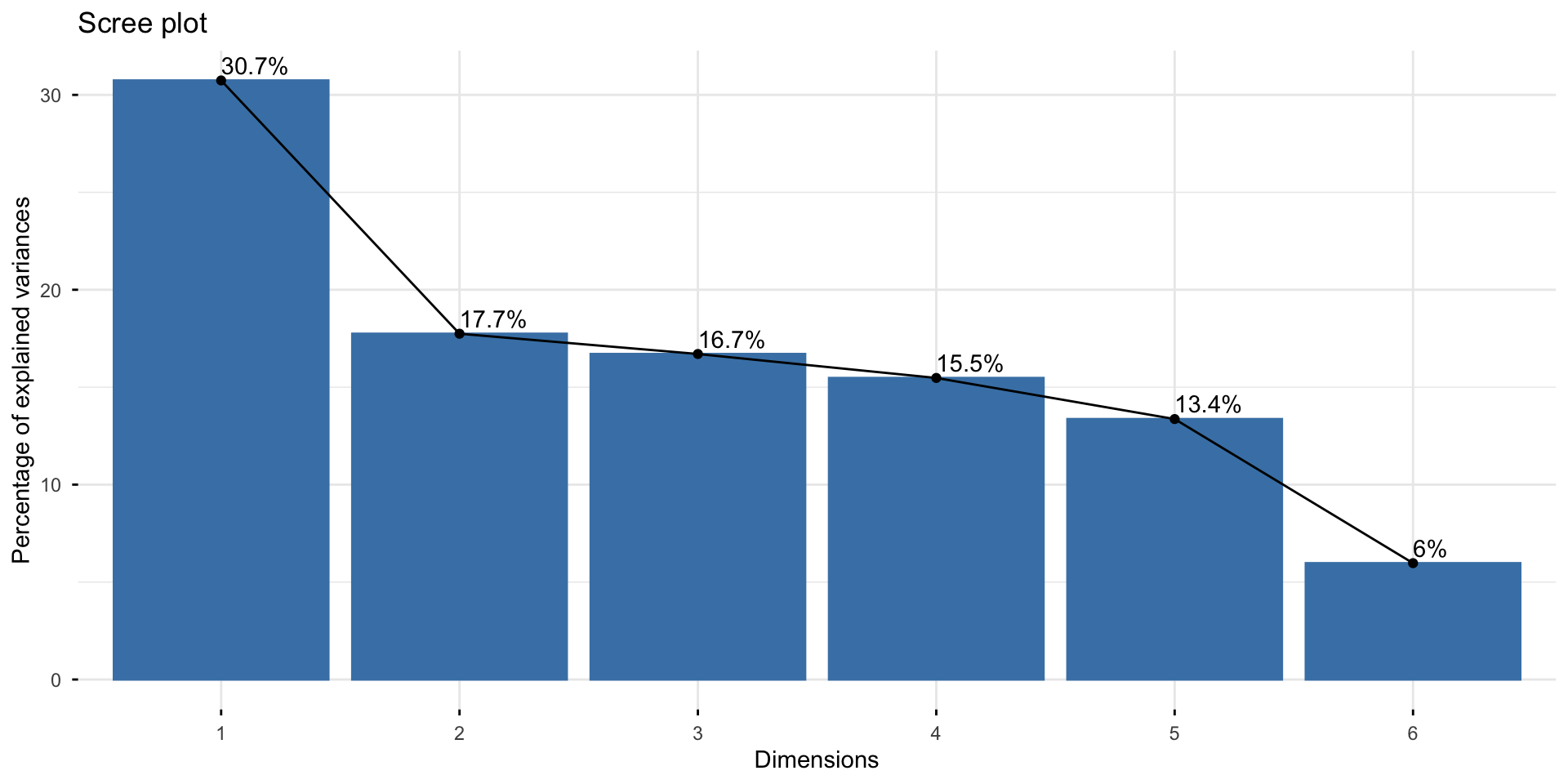

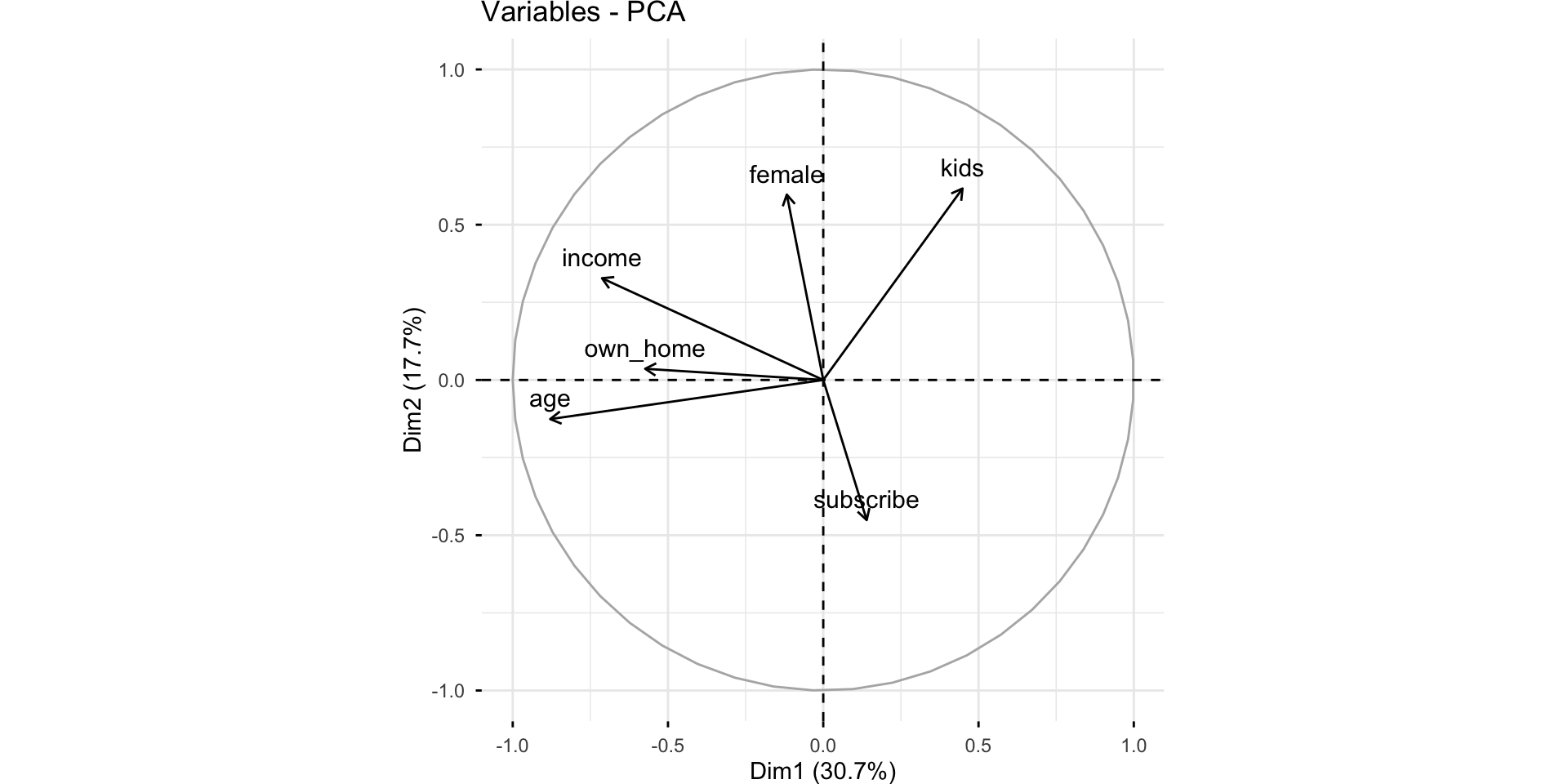
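A quick arithmetic check shows why scale matters for distance-based methods. This sketch compares two hypothetical customers who differ by 40 years in age but only $1,000 in income, using the standard deviations from the summary table (12.7 for age, 20,137.5 for income) to standardize.

```r
# Two hypothetical customers
a <- c(age = 25, income = 50000)
b <- c(age = 65, income = 51000)
sds <- c(age = 12.7, income = 20137.5)  # SDs taken from the summary table

raw_dist <- sqrt(sum((a - b)^2))          # Euclidean distance on raw values
std_dist <- sqrt(sum(((a - b) / sds)^2))  # Euclidean distance on standardized values

raw_dist  # about 1000.8: almost entirely driven by the income gap
std_dist  # about 3.15: now driven mostly by the age gap
```

On raw values, the 40-year age gap barely registers next to a $1,000 income gap; after standardizing, each variable contributes in proportion to how unusual the difference is.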

Pre-Processing with PCA

PCA

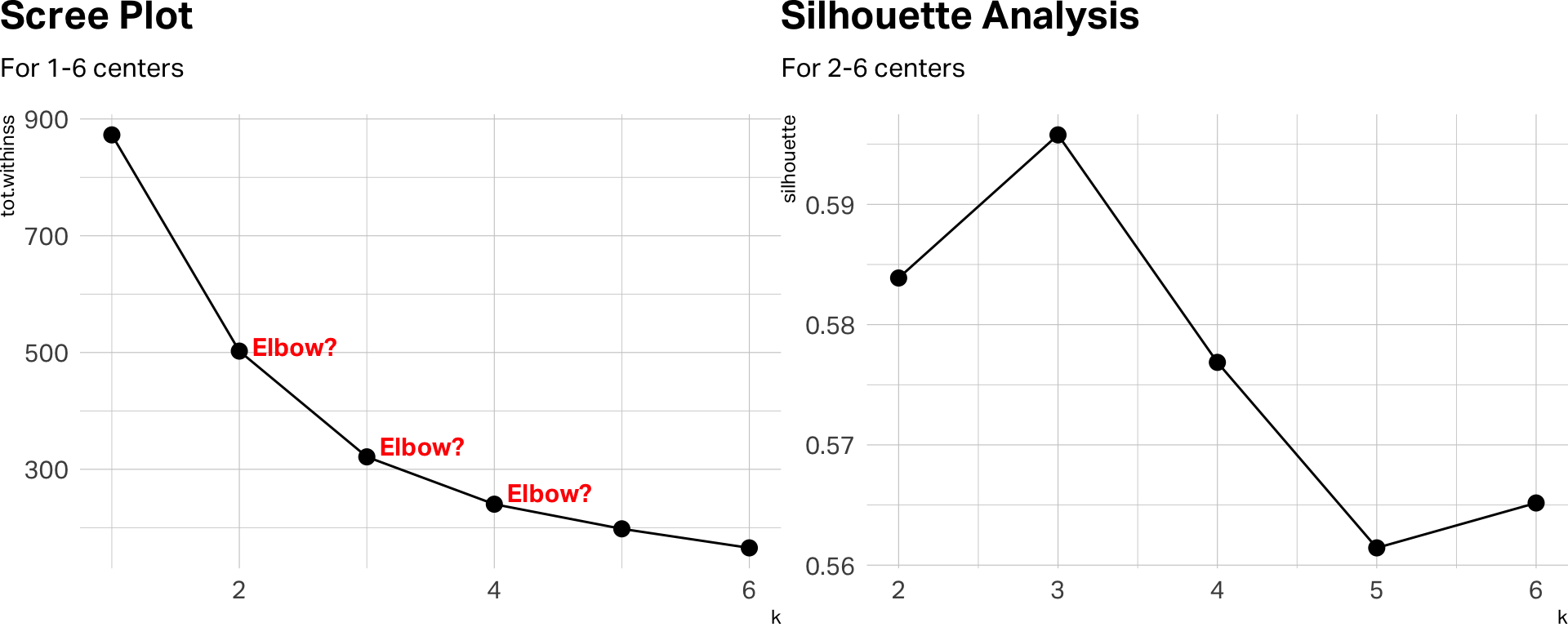
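One way to pre-process with PCA before k-means is sketched below using base R's `prcomp()`. The data `X` is hypothetical and simulated, and keeping the first two components is an assumption for illustration; in practice you would choose the number of components by looking at the cumulative variance explained.

```r
set.seed(512)
X <- data.frame(  # hypothetical numeric customer data
  age = rnorm(300, 41, 12),
  income = rnorm(300, 51000, 20000),
  kids = rpois(300, 1.3)
)

# PCA on standardized variables (scale. = TRUE divides each column by its SD)
pca <- prcomp(X, center = TRUE, scale. = TRUE)
summary(pca)$importance["Cumulative Proportion", ]  # variance explained so far

# Cluster on the component scores instead of the raw variables
scores <- pca$x[, 1:2]  # number of components kept is an assumption
km <- kmeans(scores, centers = 3, nstart = 25)
```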

How many centers?

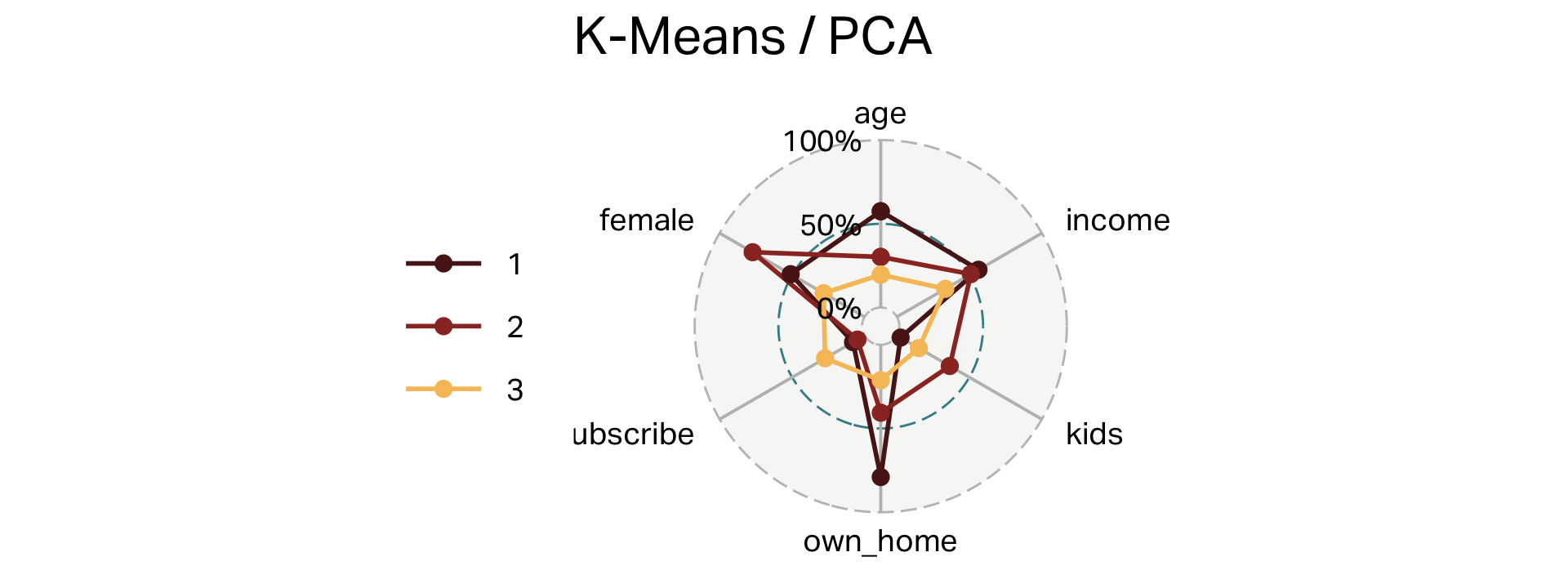

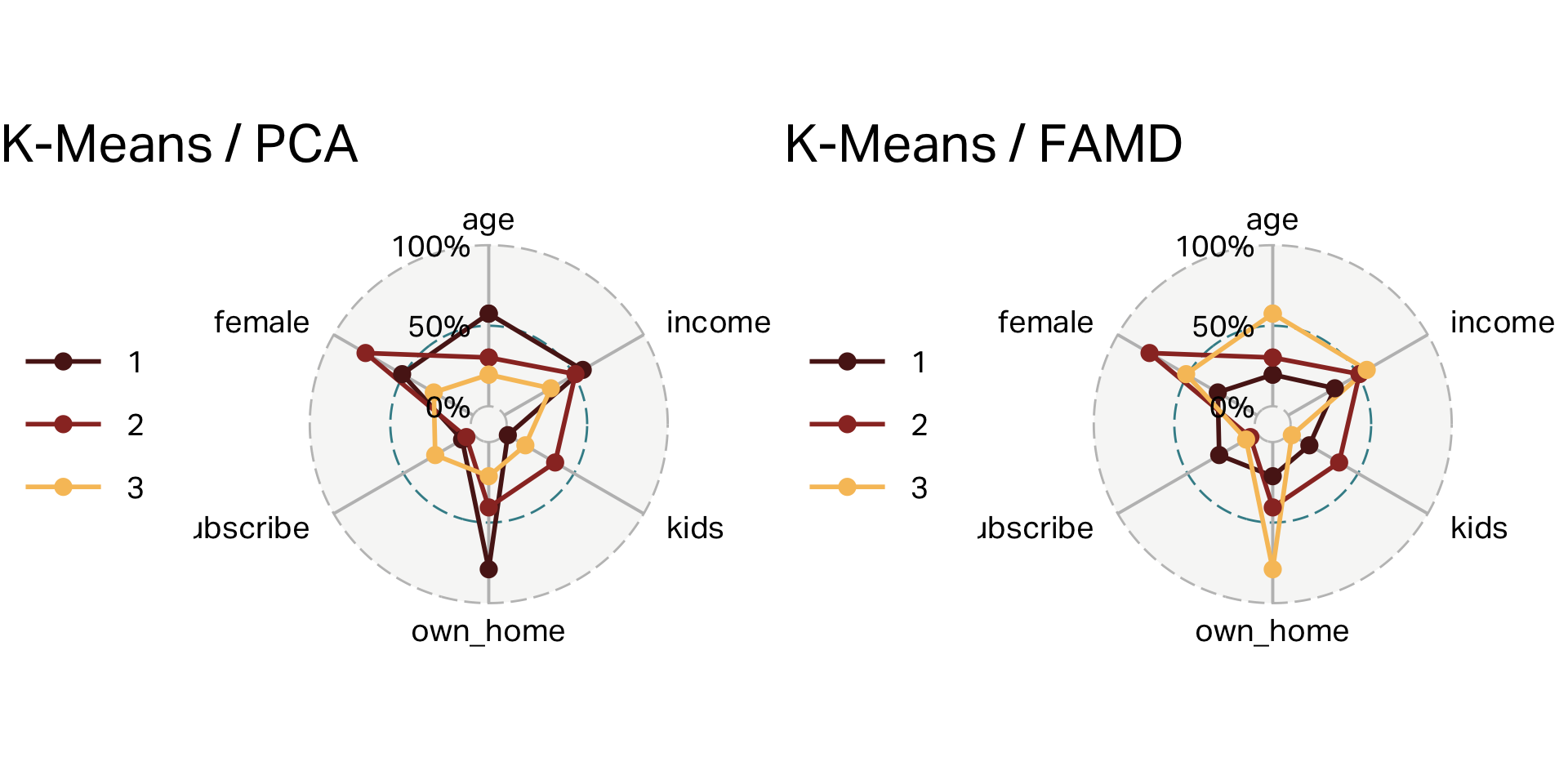
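A common heuristic for choosing the number of centers is the "elbow" method: run k-means for a range of k and look for the point where the total within-cluster sum of squares stops dropping sharply. This is a sketch on hypothetical simulated data with three well-separated groups, so the elbow should appear at k = 3.

```r
set.seed(512)
# Hypothetical data: three groups of 100 points each
X <- rbind(
  matrix(rnorm(200, mean = 0), ncol = 2),
  matrix(rnorm(200, mean = 5), ncol = 2),
  matrix(rnorm(200, mean = 10), ncol = 2)
)

# Total within-cluster sum of squares for k = 1, ..., 8
wss <- sapply(1:8, function(k) kmeans(X, centers = k, nstart = 25)$tot.withinss)

plot(1:8, wss, type = "b",
     xlab = "Number of clusters (k)",
     ylab = "Total within-cluster sum of squares")
```

Real customer data rarely gives an elbow this clean, so the curve is best treated as one input alongside interpretability of the resulting segments.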

Three clusters (K-means)

| cluster | n | age | income | kids | own_home | subscribe | female |
|---|---|---|---|---|---|---|---|
| 1 | 100 | 55 | 60,107 | 0 | 79% | 8% | 51% |
| 2 | 101 | 38 | 56,016 | 3 | 41% | 5% | 77% |
| 3 | 99 | 28 | 28,270 | 1 | 21% | 27% | 28% |
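A profile table like the one above can be built by attaching cluster labels to the data and averaging each variable within each cluster. This sketch uses a hypothetical simulated `customers` data frame with the same column names as the case data, and summarizes with means; the slides' tables may round or use different summaries.

```r
set.seed(512)
customers <- data.frame(  # hypothetical customers with the case's column names
  age = round(rnorm(300, 41, 12)),
  income = rnorm(300, 51000, 20000),
  kids = rpois(300, 1.3),
  own_home = rbinom(300, 1, 0.5),
  subscribe = rbinom(300, 1, 0.1),
  female = rbinom(300, 1, 0.5)
)

# Cluster on standardized variables, then attach the labels
customers$cluster <- kmeans(scale(customers), centers = 3, nstart = 25)$cluster

# Mean of every variable within each cluster, plus cluster sizes
profile <- aggregate(. ~ cluster, data = customers, FUN = mean)
profile$n <- as.vector(table(customers$cluster))

# Show the 0/1 variables as percentages, as in the slide tables
bin <- c("own_home", "subscribe", "female")
profile[bin] <- round(100 * profile[bin])
profile <- profile[c("cluster", "n", "age", "income", "kids", bin)]
profile
```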

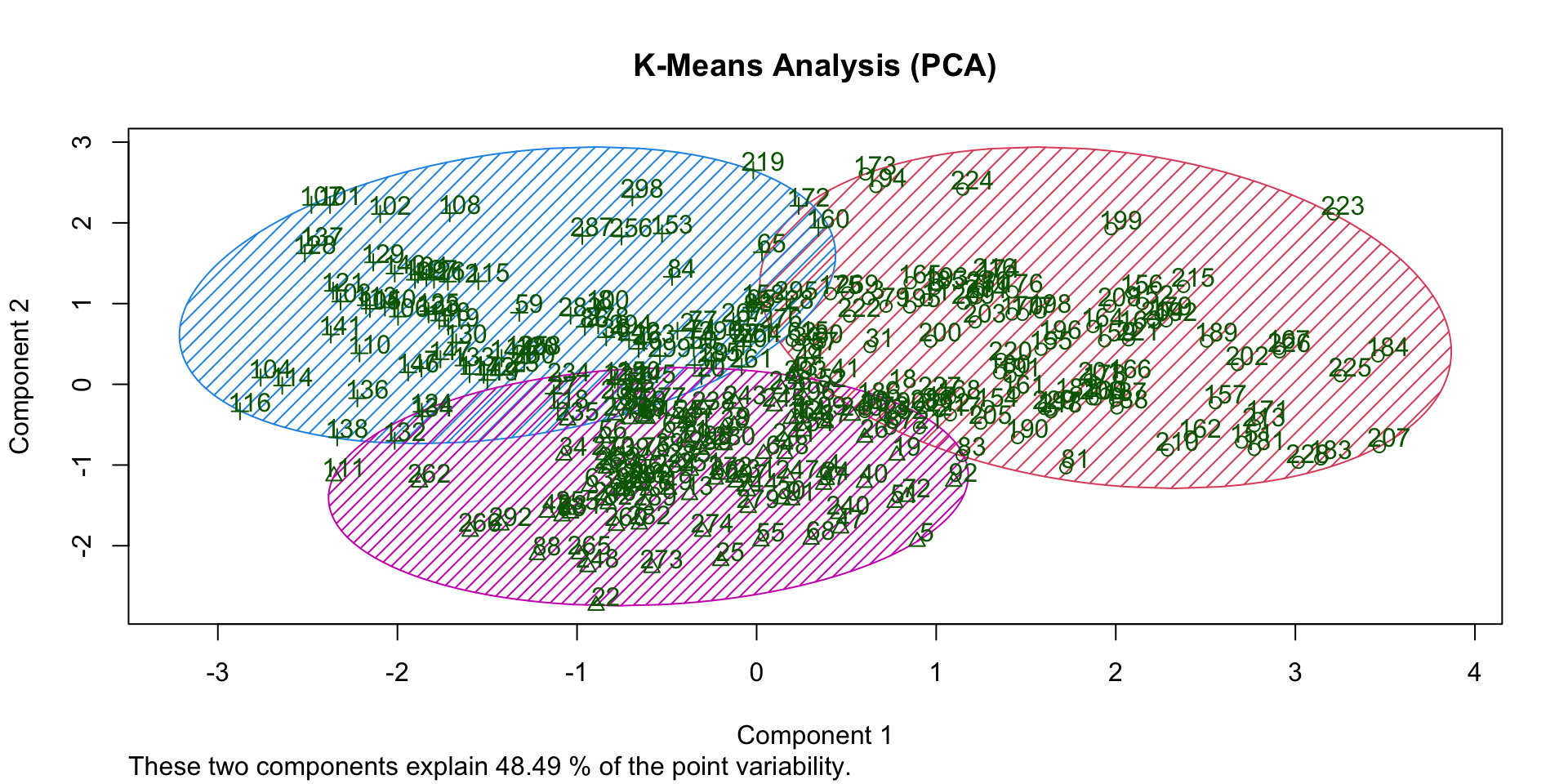

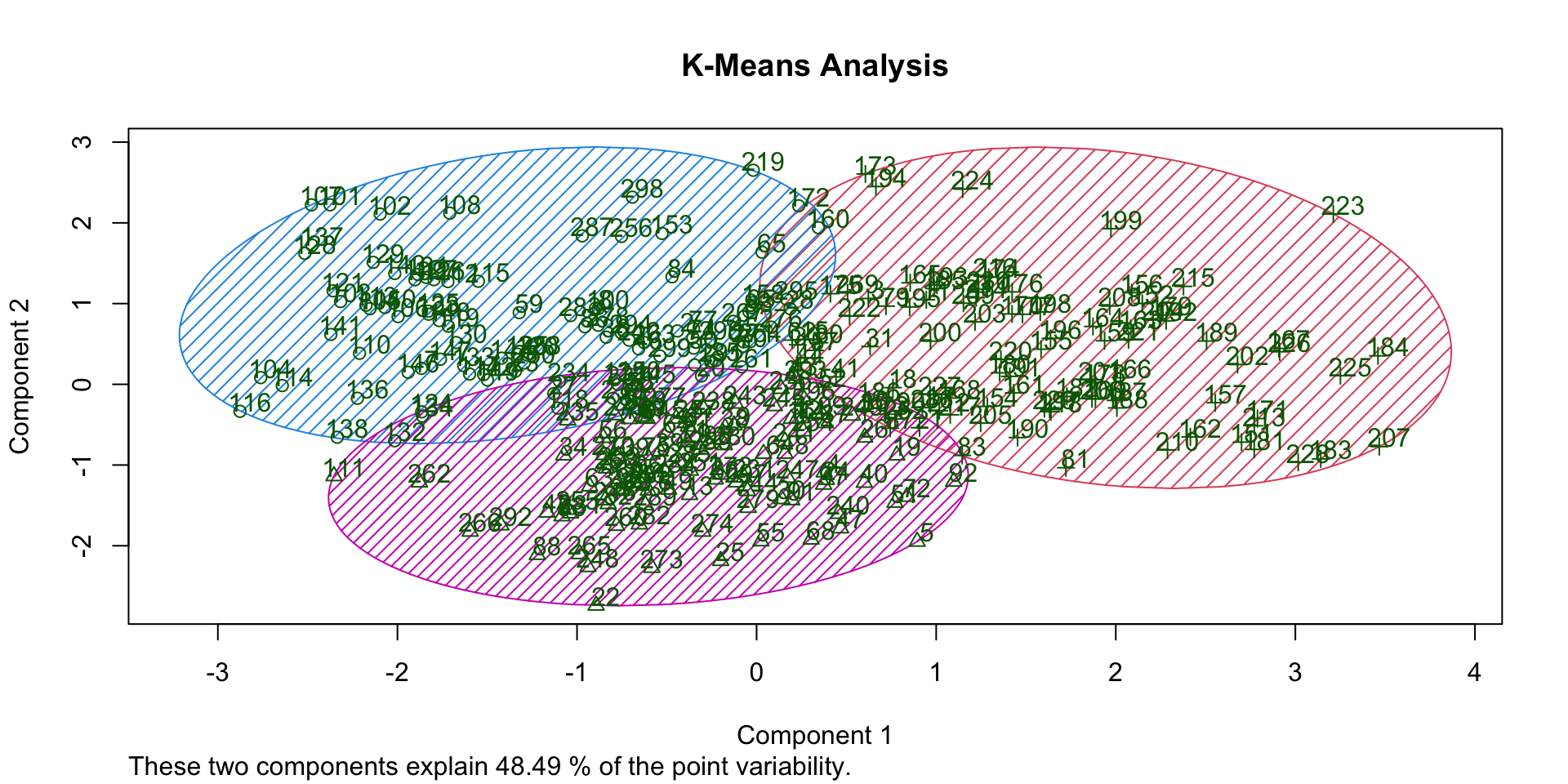

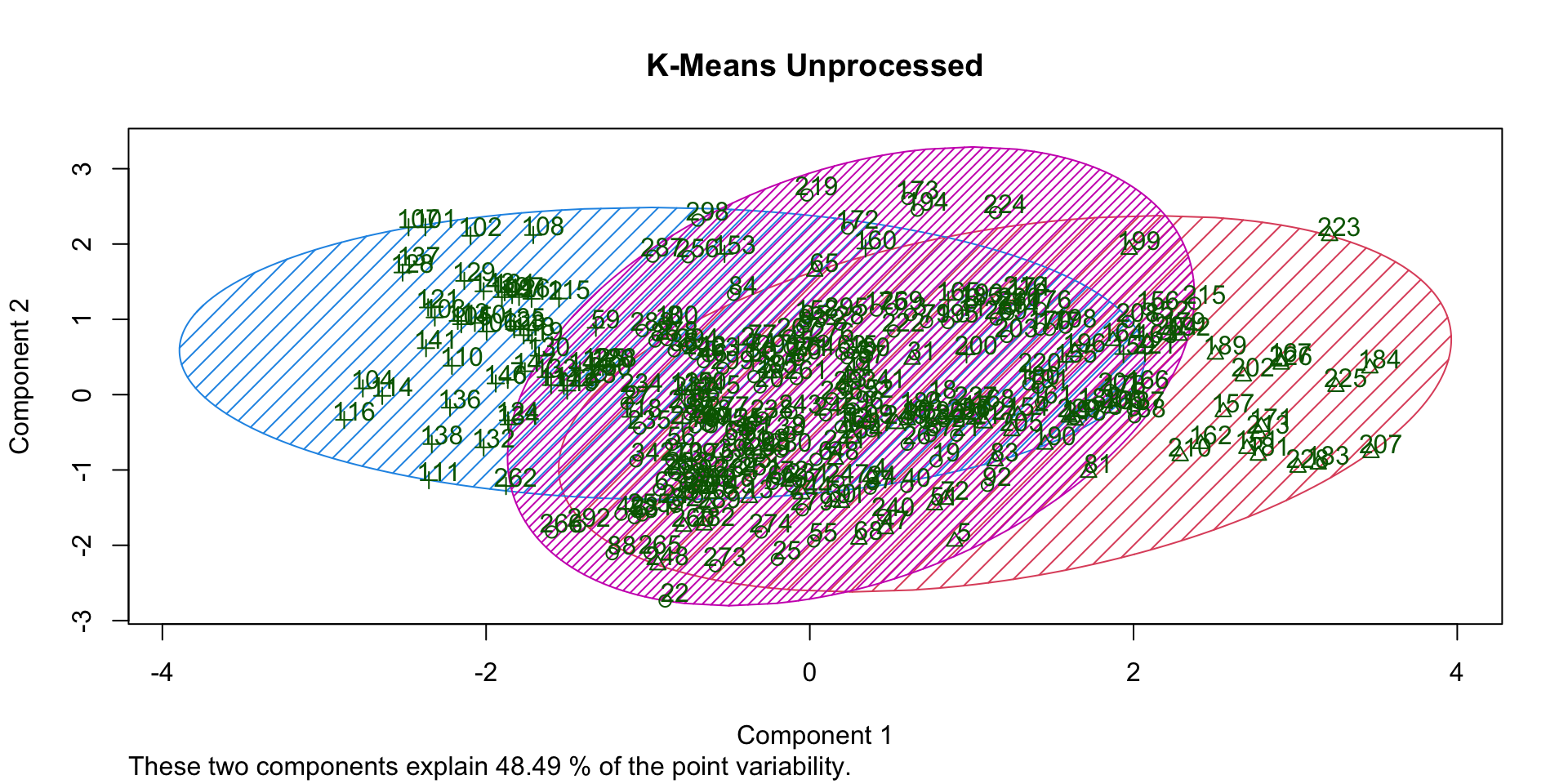

Overlap (K-Means)

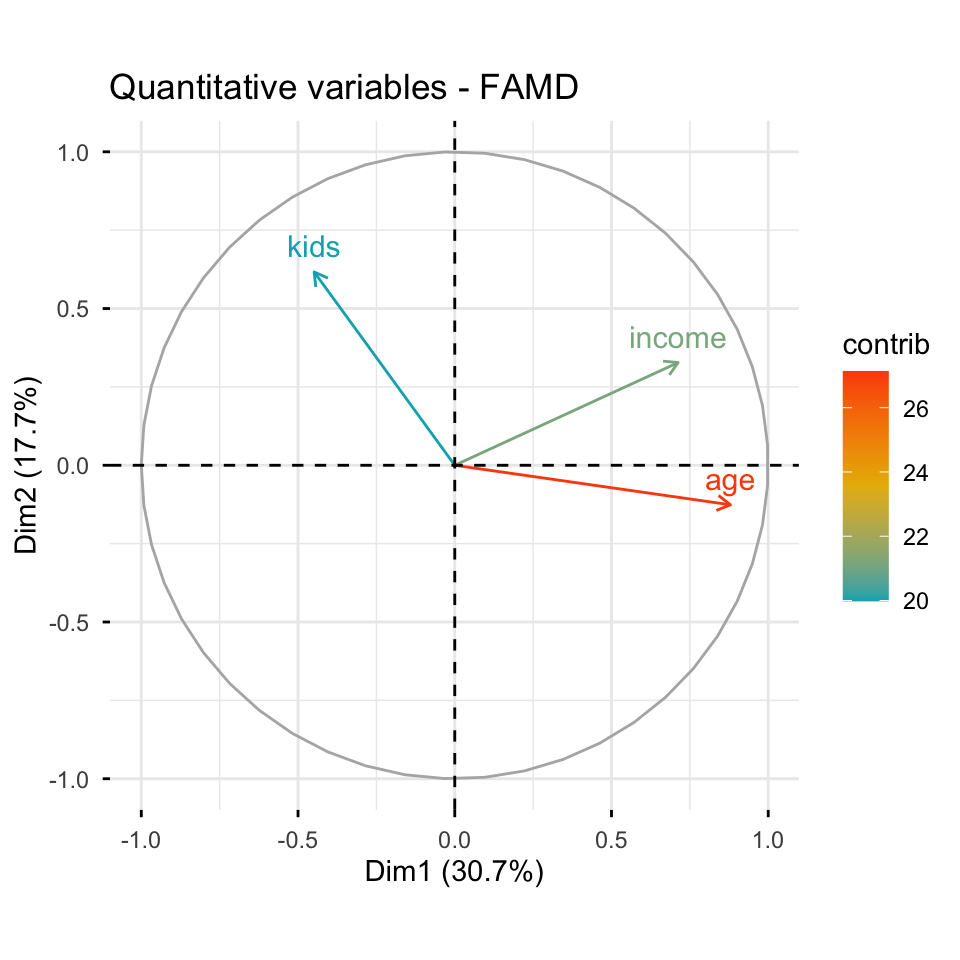

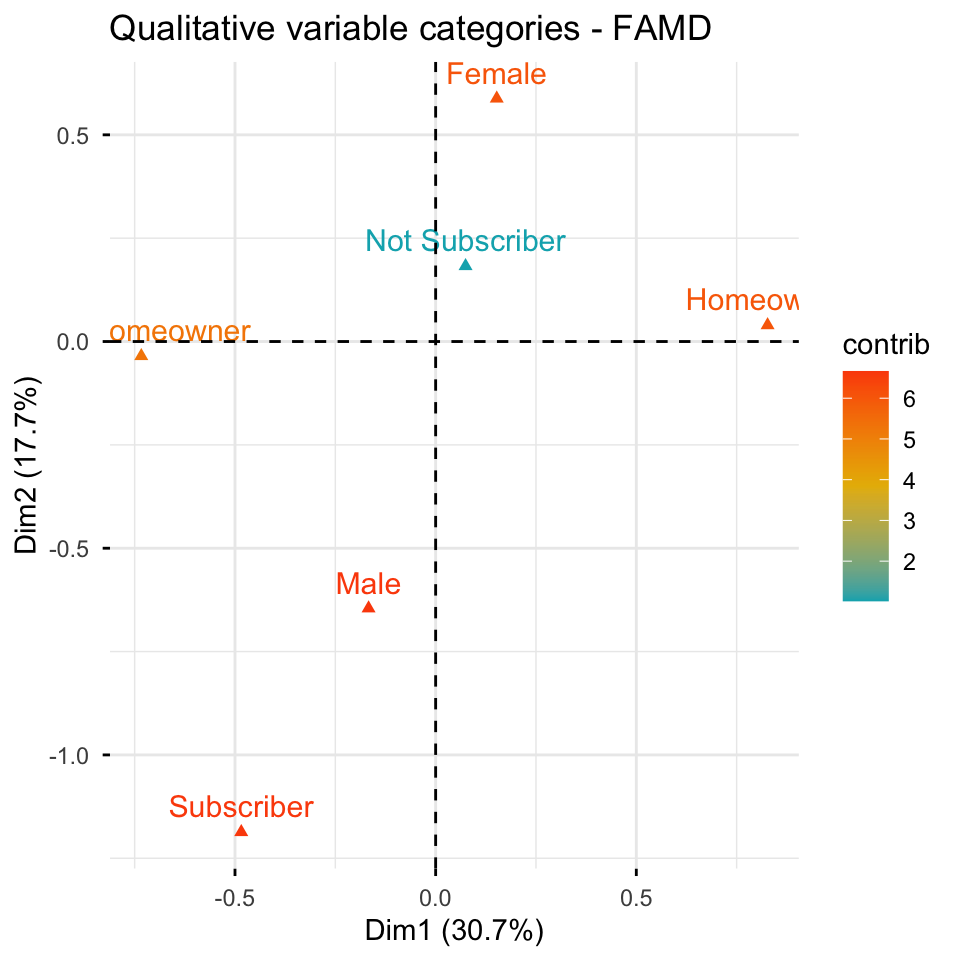

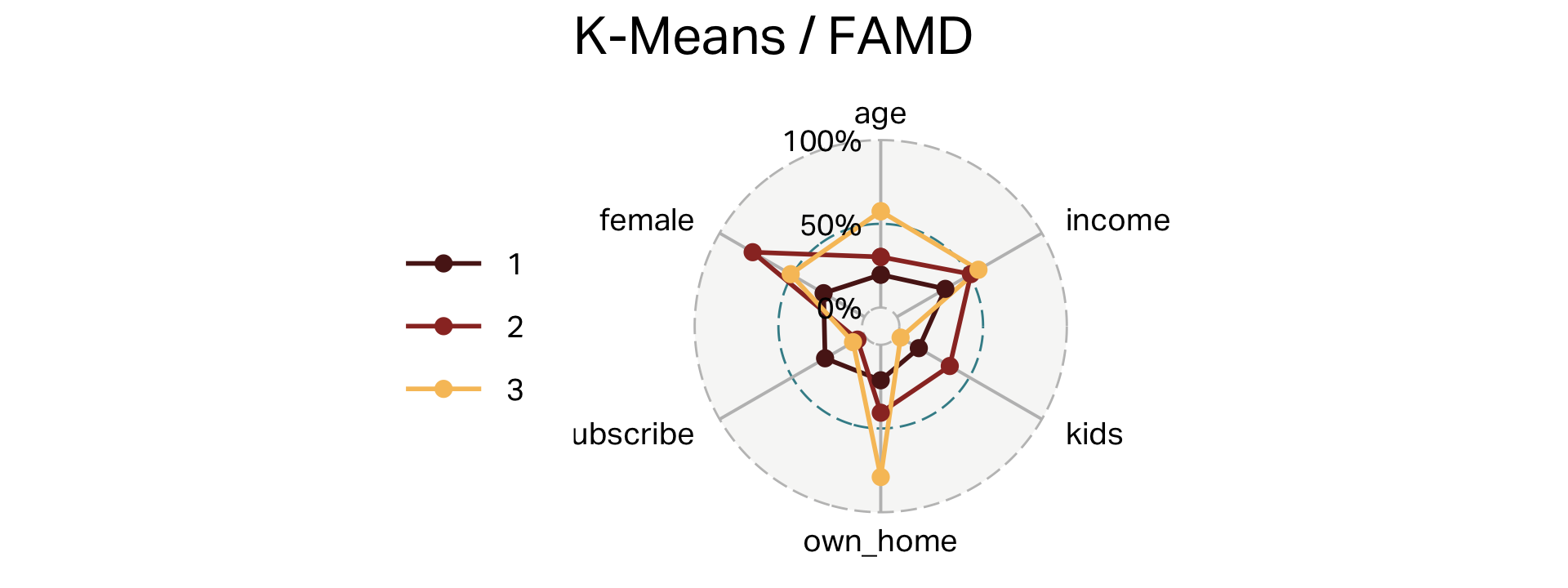

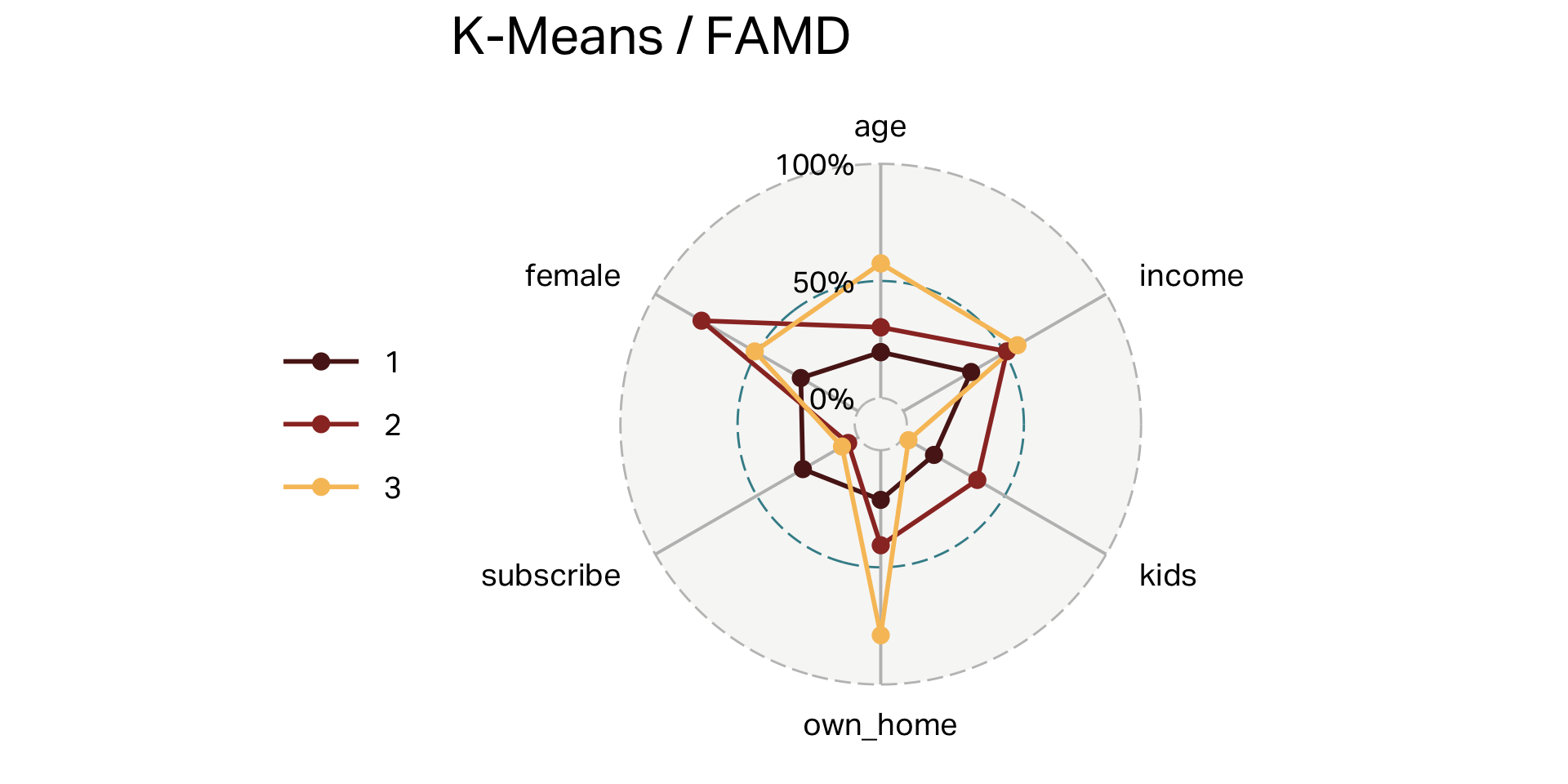

K-Means with FAMD

- Factor Analysis of Mixed Data (FAMD) is a dimension-reduction technique specifically designed for datasets containing both continuous (numeric) and discrete (categorical) variables.

- Best suited as an alternative to PCA when dealing with mixed variable types.

- Differentiated from PCA by handling categorical variables directly, capturing associations between numeric and categorical variables effectively.

- Results can be visualized much like PCA results. Scaling numeric variables remains important, and the method is most useful when you need to interpret relationships across different data types.
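The core idea behind FAMD can be sketched in base R: standardize the numeric variables, turn each categorical variable into indicator columns weighted by the inverse square root of each level's share, and run an ordinary PCA on the combined matrix. This is a simplified, hand-rolled approximation for illustration only; `famd_like()` is a hypothetical helper, the data is simulated, and `FactoMineR::FAMD()` is the usual R implementation with more complete handling.

```r
# Simplified FAMD-style transformation (hypothetical helper, not FactoMineR)
famd_like <- function(num, cats) {
  num_block <- scale(num)  # numeric variables: mean 0, SD 1
  cat_block <- do.call(cbind, lapply(cats, function(f) {
    f <- droplevels(factor(f))
    ind <- model.matrix(~ f - 1)               # one 0/1 indicator column per level
    p <- colMeans(ind)                         # share of respondents in each level
    sweep(sweep(ind, 2, p), 2, sqrt(p), "/")   # center, then divide by sqrt(share)
  }))
  prcomp(cbind(num_block, cat_block), center = FALSE)  # blocks are already centered
}

set.seed(512)
n <- 200
num <- data.frame(age = rnorm(n, 41, 12), income = rnorm(n, 51000, 20000))
cats <- data.frame(own_home = factor(rbinom(n, 1, 0.5)),
                   female = factor(rbinom(n, 1, 0.5)))

res <- famd_like(num, cats)
coords <- res$x[, 1:3]  # number of dimensions kept is an assumption
km <- kmeans(coords, centers = 3, nstart = 25)
```

The weighting gives rare categories more influence per respondent, which is what lets categorical and numeric variables contribute on a comparable footing.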

FAMD

Three clusters (FAMD)

| cluster | n | age | income | kids | own_home | subscribe | female |
|---|---|---|---|---|---|---|---|
| 1 | 99 | 28 | 28,270 | 1 | 21% | 27% | 28% |
| 2 | 101 | 38 | 56,016 | 3 | 41% | 5% | 77% |
| 3 | 100 | 55 | 60,107 | 0 | 79% | 8% | 51% |

Overlap (FAMD)

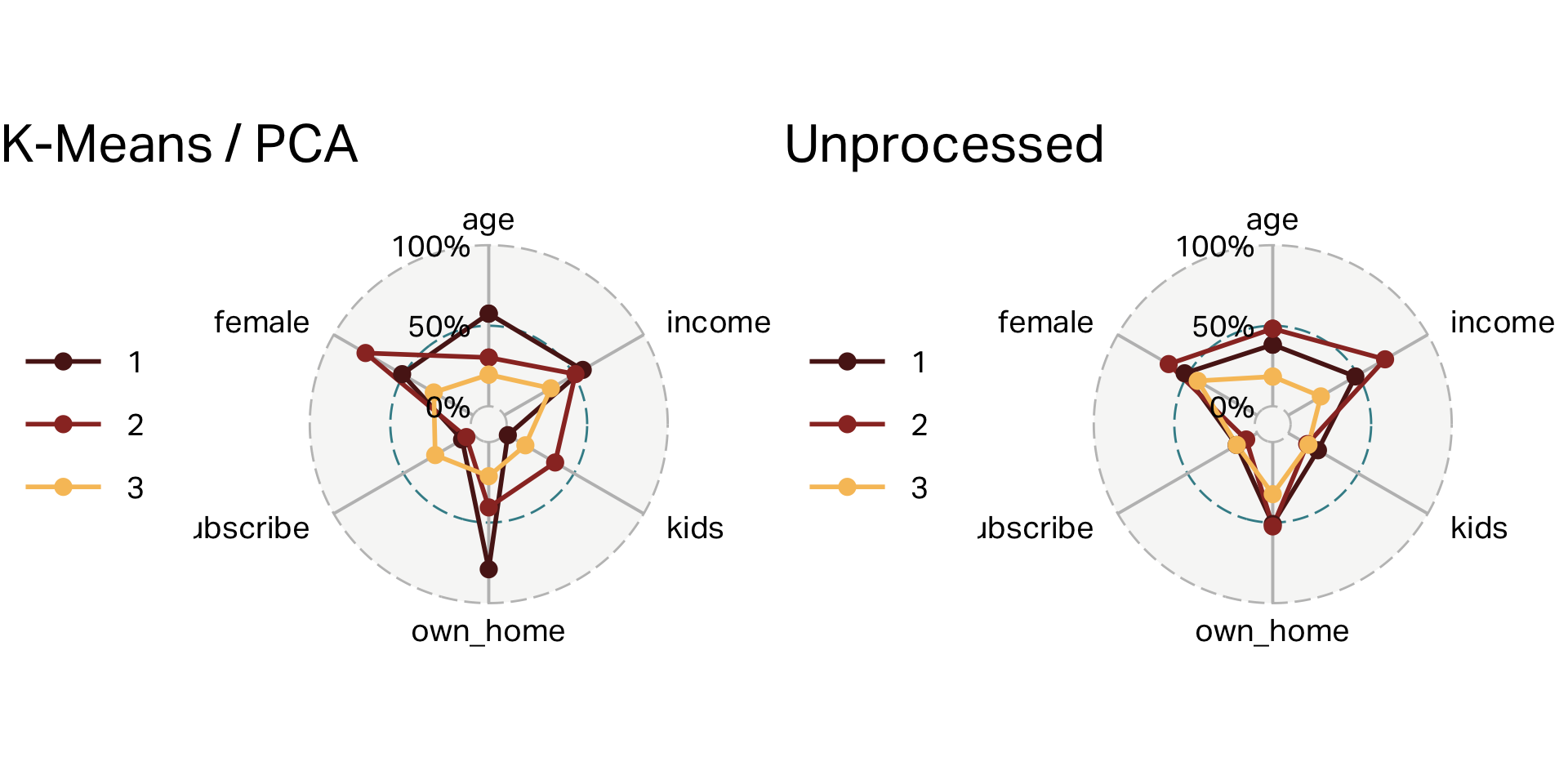

Comparison
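Cluster numbers are arbitrary, so two methods can find the same segments under different labels. A cross-tabulation plus the adjusted Rand index (ARI) compares two solutions while ignoring label numbering: 1 means identical partitions, values near 0 mean agreement no better than chance. `adjusted_rand()` is a hypothetical helper written from the standard ARI formula, and the label vectors are toy examples.

```r
# Adjusted Rand index between two vectors of cluster labels
adjusted_rand <- function(a, b) {
  tab <- table(a, b)
  pairs <- function(x) sum(choose(x, 2))  # number of pairs within groups
  both <- pairs(tab)                      # pairs grouped together in both solutions
  rows <- pairs(rowSums(tab))
  cols <- pairs(colSums(tab))
  expected <- rows * cols / choose(length(a), 2)
  (both - expected) / ((rows + cols) / 2 - expected)
}

# Toy example: same grouping, different label numbers
method_a <- c(1, 1, 2, 2, 3, 3)
method_b <- c(3, 3, 1, 1, 2, 2)
table(method_a, method_b)         # each row has exactly one non-zero cell
adjusted_rand(method_a, method_b) # 1: the partitions are identical
```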

What if we didn’t preprocess at all?

K-Means with no pre-processing

| cluster | n | age | income | kids | own_home | subscribe | female |
|---|---|---|---|---|---|---|---|
| 1 | 169 | 41 | 52,298 | 1 | 51% | 15% | 52% |
| 2 | 63 | 48 | 73,241 | 0 | 52% | 8% | 63% |
| 3 | 68 | 25 | 22,979 | 1 | 32% | 15% | 43% |

Overlap (no processing)

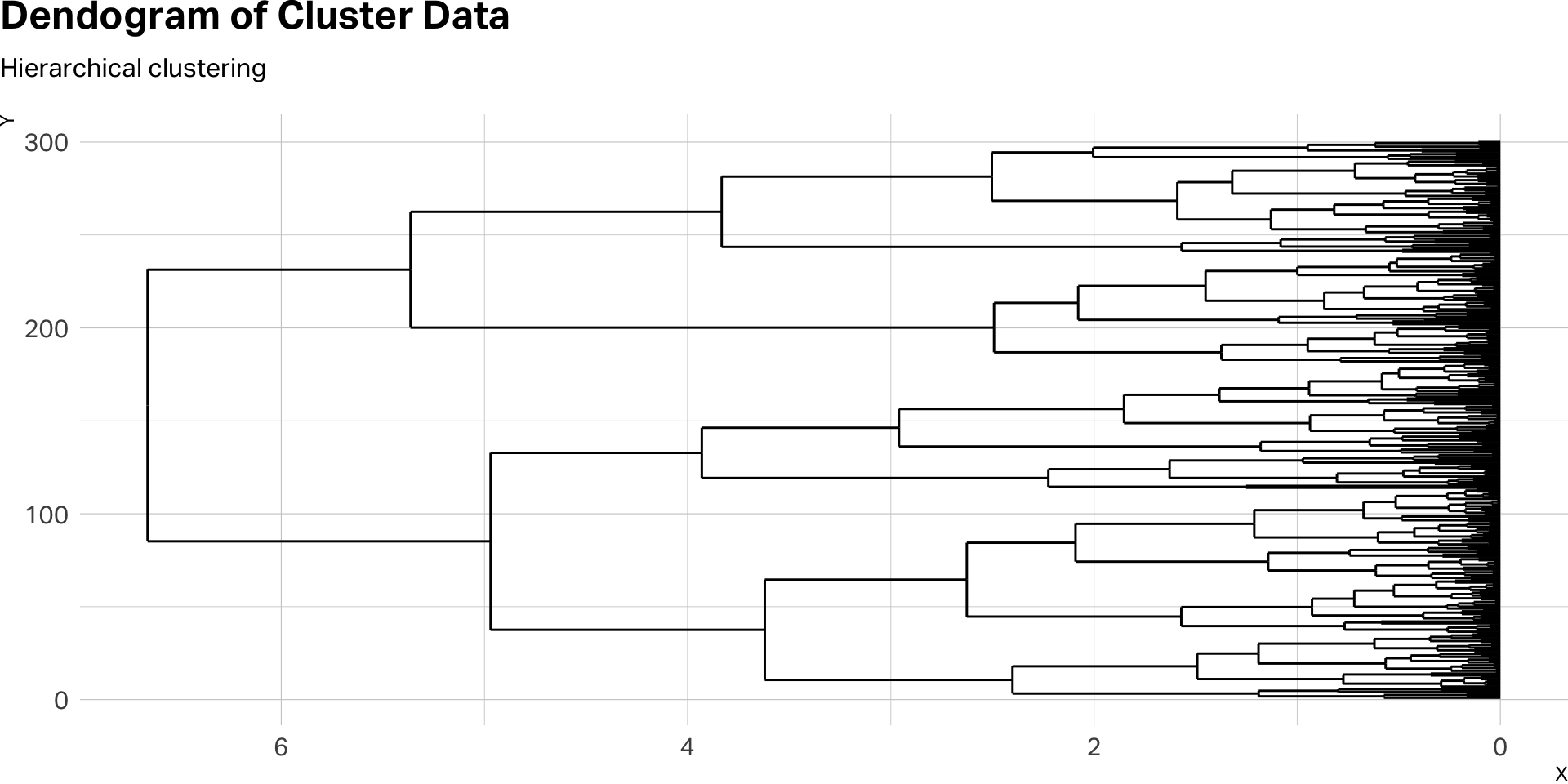

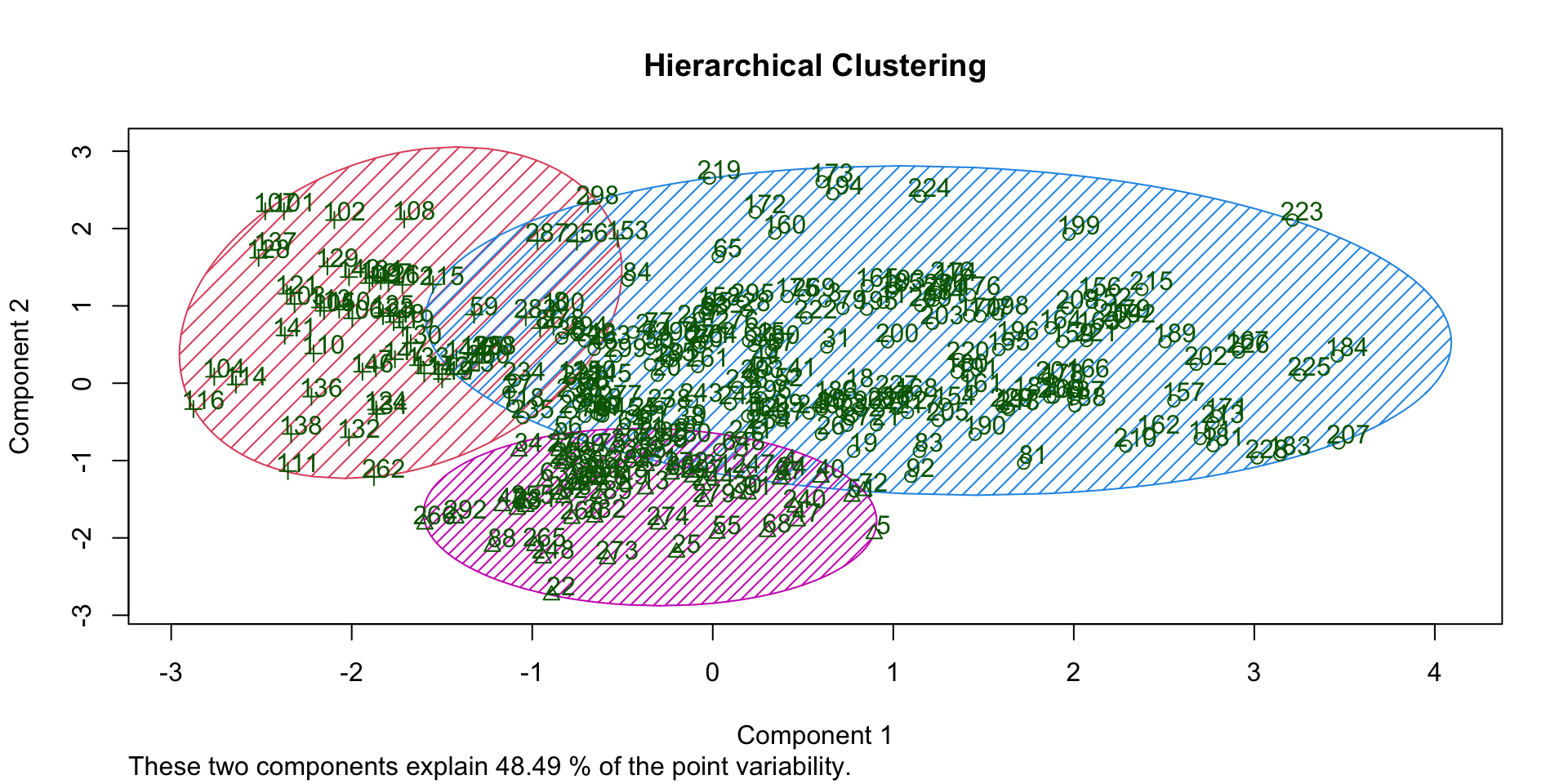

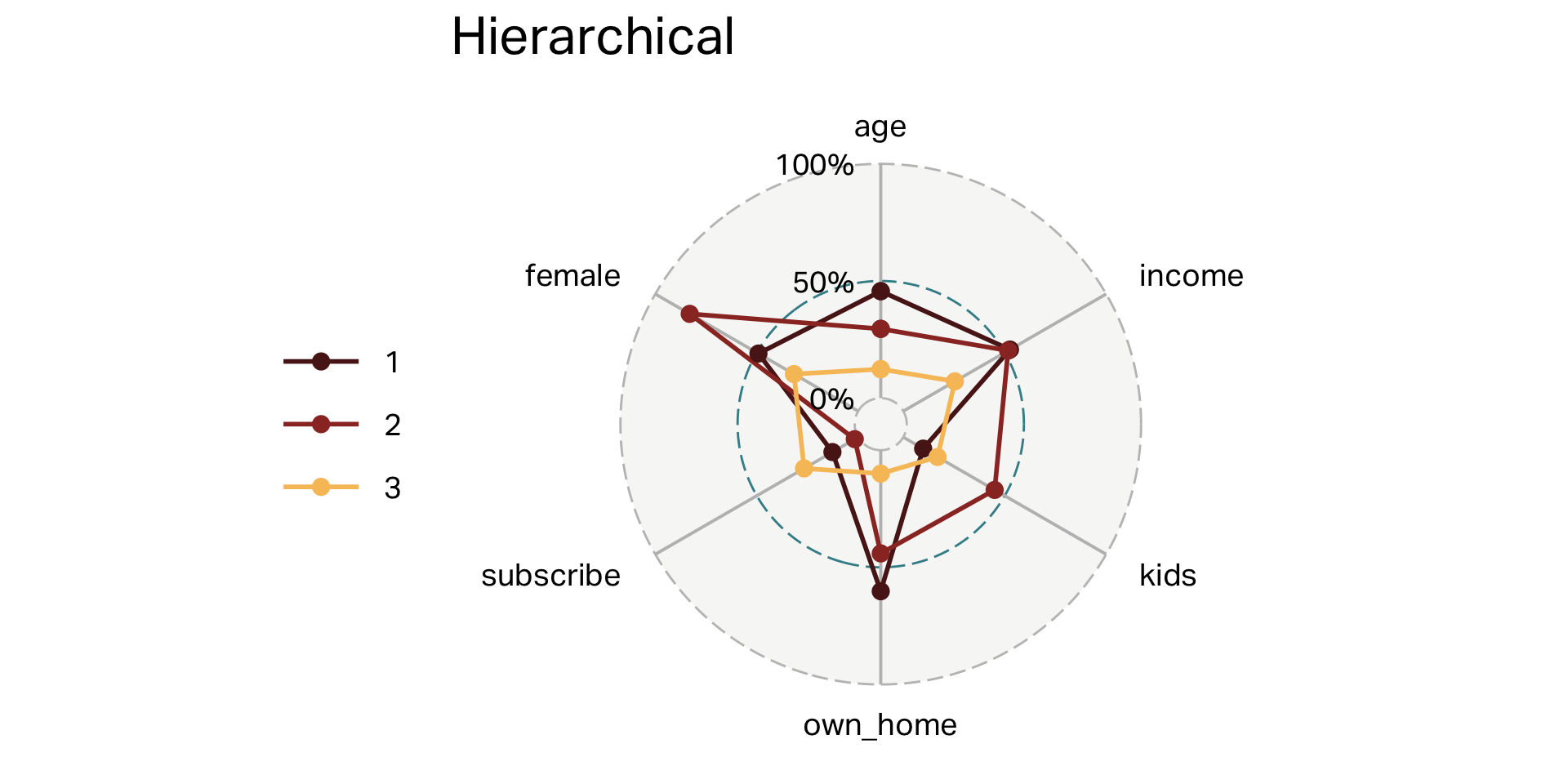

Hierarchical clustering

- Hierarchical clustering is a method that builds nested clusters by iteratively merging or splitting data points based on similarity, resulting in a dendrogram that visualizes cluster relationships.

- Best suited when the number of clusters is unknown upfront or when visualizing cluster structures at multiple levels of granularity is valuable.

- Differentiated from other methods (like k-means or PAM) by its hierarchical, tree-like structure, allowing flexible selection of the number of clusters after analysis.

- Computationally demanding for very large datasets; sensitive to chosen distance metrics and linkage criteria (e.g., single, complete, average linkage).
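The workflow described above can be sketched with base R's `dist()`, `hclust()`, and `cutree()`. The data is hypothetical and simulated, and Ward's linkage (`method = "ward.D2"`) is one choice among several; `"single"`, `"complete"`, and `"average"` are also accepted by `hclust()` and can produce quite different trees.

```r
set.seed(512)
X <- scale(data.frame(  # hypothetical numeric customer data, standardized
  age = c(rnorm(50, 28, 4), rnorm(50, 55, 6)),
  income = c(rnorm(50, 28000, 5000), rnorm(50, 60000, 8000))
))

d <- dist(X, method = "euclidean")  # pairwise distances between customers
hc <- hclust(d, method = "ward.D2") # linkage choice is an assumption

plot(hc, labels = FALSE, main = "Dendrogram (Ward linkage)")
rect.hclust(hc, k = 2)              # outline a 2-cluster cut on the tree

# Choose the number of clusters after fitting by cutting the tree
segments <- cutree(hc, k = 2)
table(segments)
```

Because the full tree is built once, trying a different number of segments only requires another `cutree()` call, not a refit.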

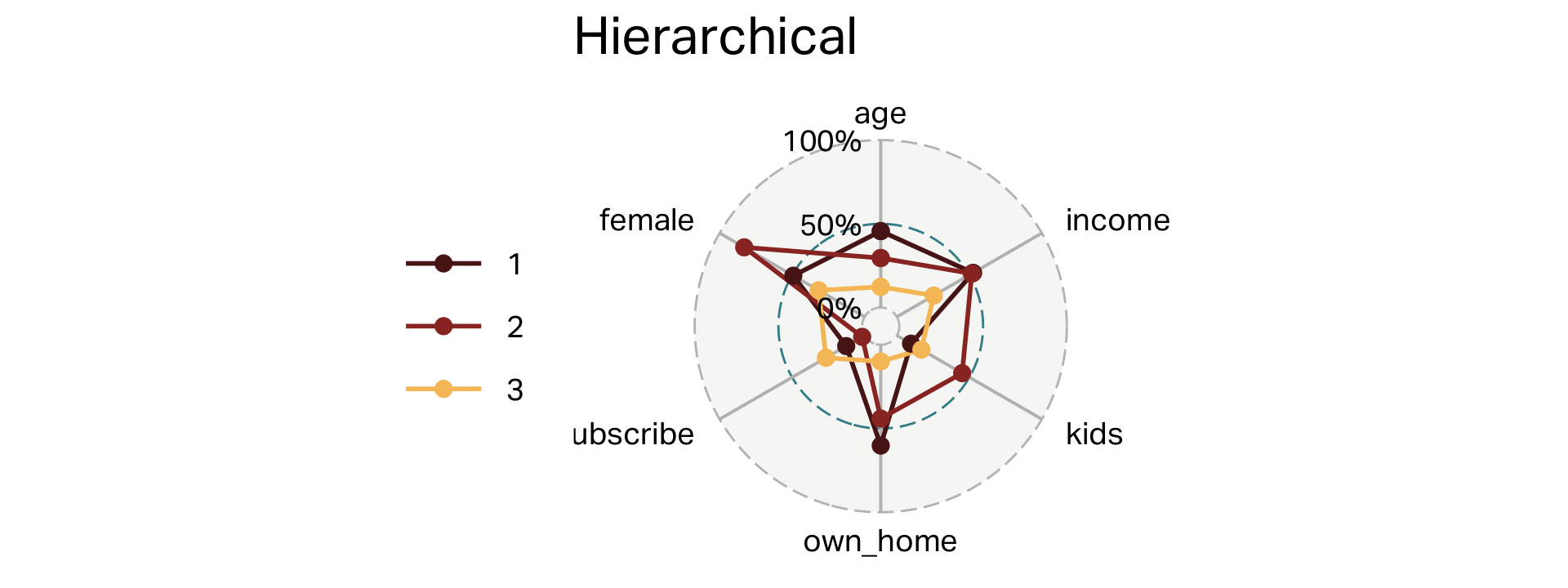

Dendrograms

Clusters (HC)

| segment | n | age | income | kids | own_home | subscribe | female |
|---|---|---|---|---|---|---|---|
| 1 | 181 | 45 | 57,037 | 0 | 60% | 13% | 49% |
| 2 | 59 | 38 | 54,509 | 3 | 44% | 2% | 83% |
| 3 | 60 | 25 | 23,116 | 1 | 10% | 27% | 32% |

Overlap (HC)

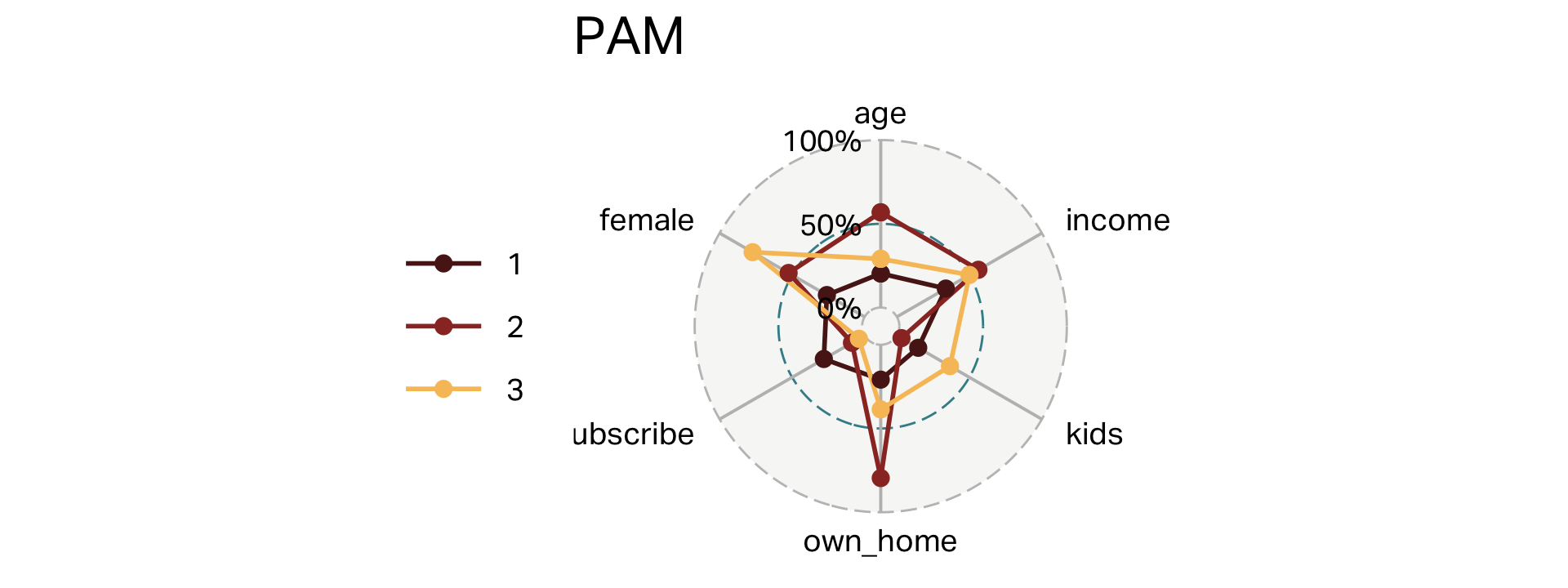

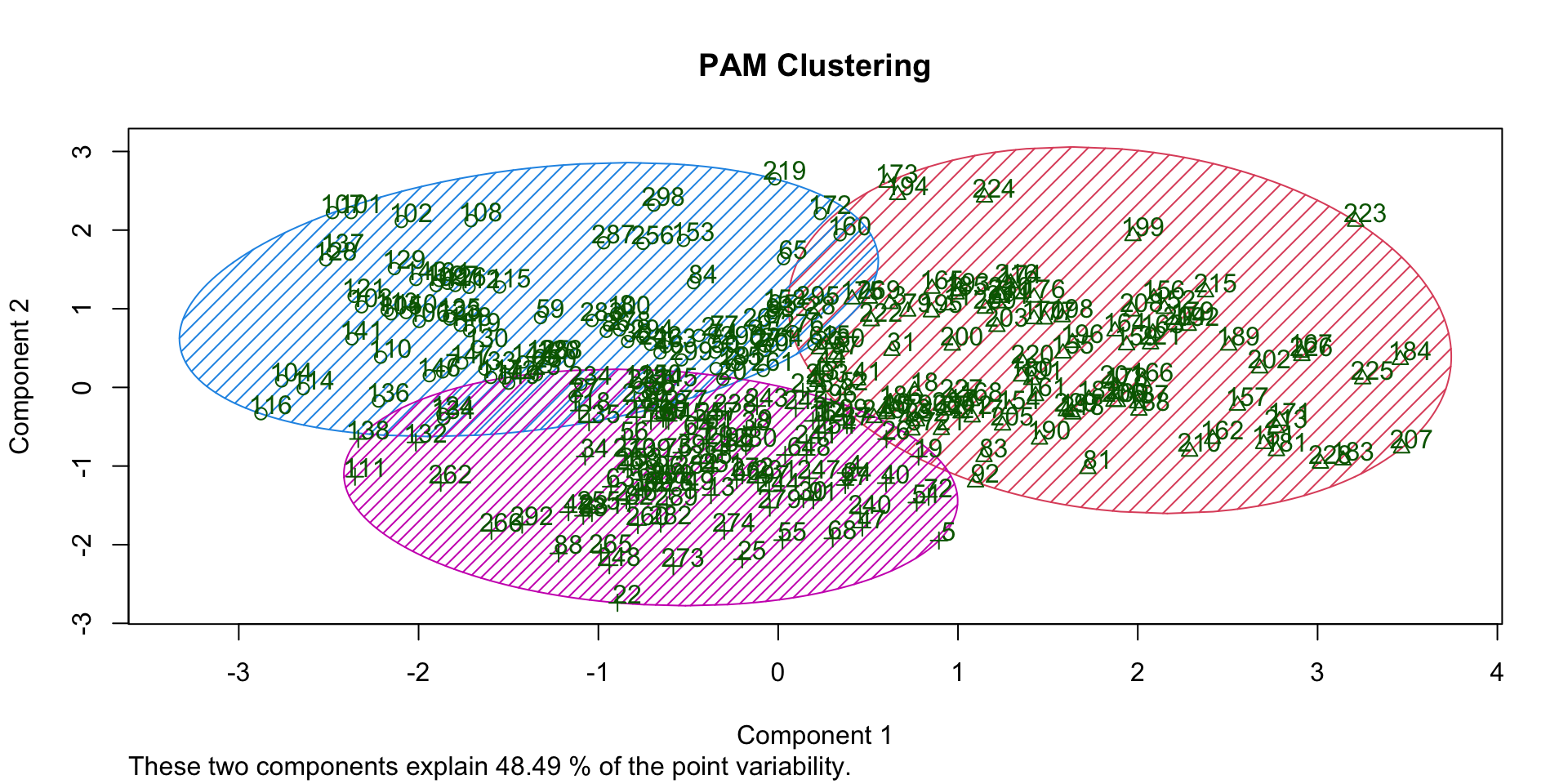

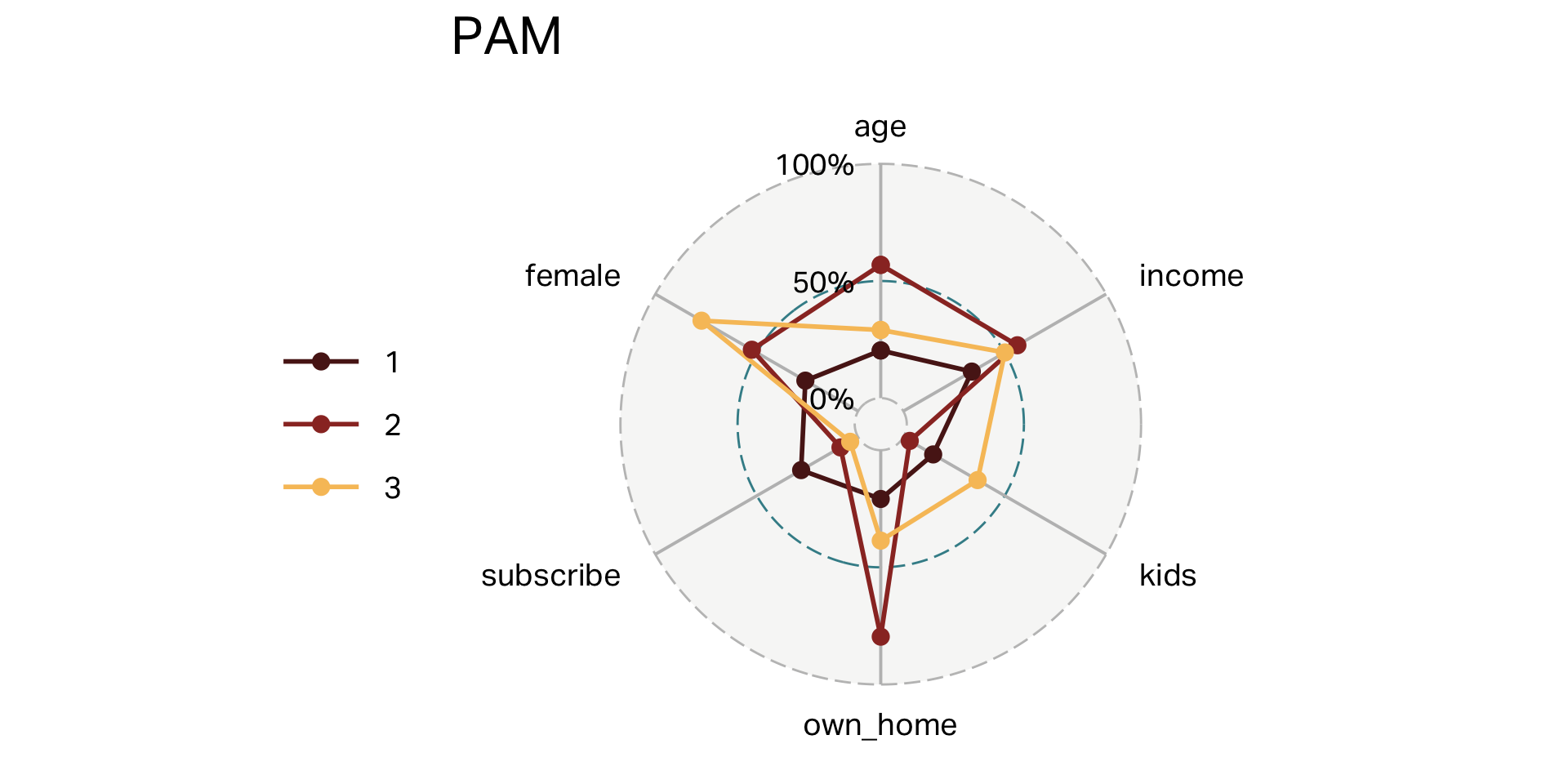

PAM clustering

PAM

- Partitioning Around Medoids (PAM) is a clustering algorithm that groups data points around representative medoids—actual data points that serve as cluster centers.

- Best suited for situations with smaller datasets or when data contains outliers, as medoids are robust and less sensitive to extreme values.

- Differentiated from methods like k-means by using real data points as cluster centers (medoids), rather than computed averages (centroids), enhancing interpretability and stability.

- Computationally intensive on large datasets; consider using faster variants (e.g., CLARA) for bigger samples.
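A minimal PAM sketch using `pam()` from the `cluster` package (a recommended package bundled with standard R installations). The data is hypothetical and simulated, with one artificial outlier added to show that the medoids remain real, typical customers. For mixed numeric and categorical data, `pam()` also accepts a dissimilarity object, for example one built with `daisy(..., metric = "gower")`.

```r
library(cluster)

set.seed(512)
X <- scale(data.frame(  # hypothetical numeric customer data, standardized
  age = c(rnorm(50, 28, 4), rnorm(50, 55, 6)),
  income = c(rnorm(50, 28000, 5000), rnorm(50, 60000, 8000))
))
X[1, ] <- c(10, 10)  # hypothetical extreme outlier

fit <- pam(X, k = 2)
fit$id.med            # row numbers of the medoids: actual customers in the data
fit$medoids           # their (standardized) coordinates
table(fit$clustering)
```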

Clusters (PAM)

| segment | n | age | income | kids | own_home | subscribe | female |
|---|---|---|---|---|---|---|---|
| 1 | 96 | 31 | 28,793 | 1 | 21% | 28% | 26% |
| 2 | 103 | 54 | 60,168 | 0 | 80% | 9% | 52% |
| 3 | 101 | 38 | 55,847 | 3 | 39% | 4% | 77% |

Overlap (PAM)

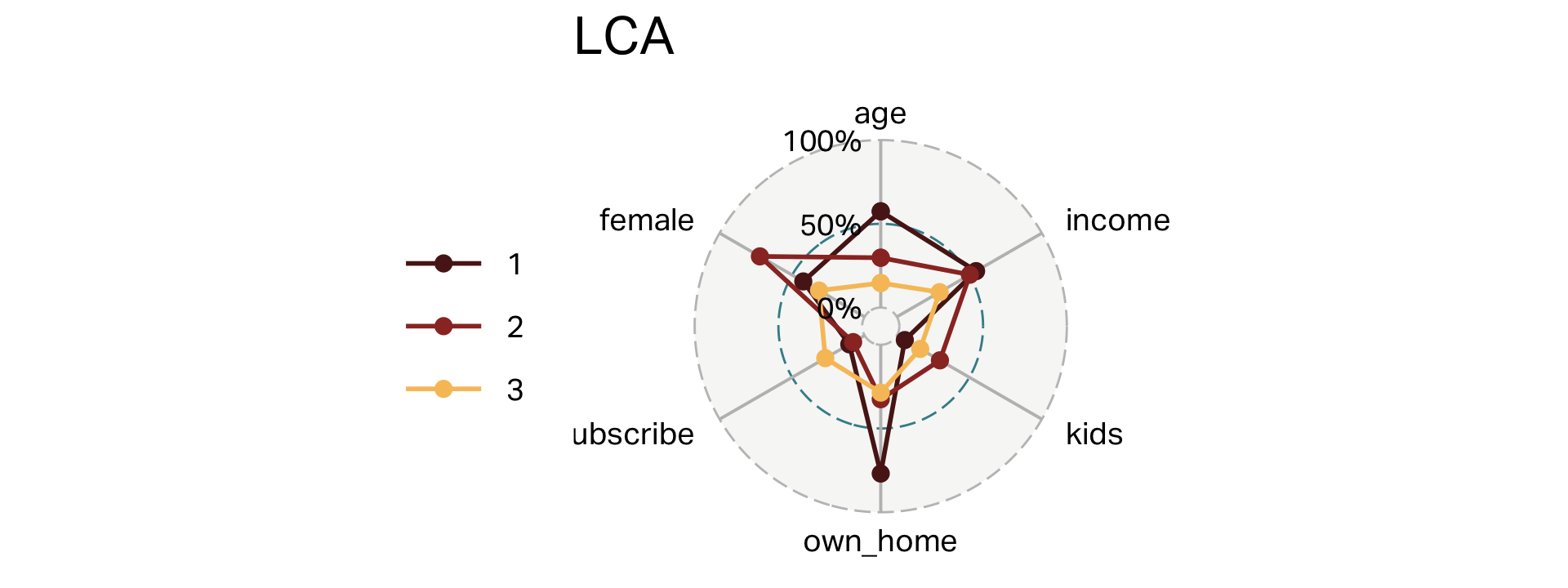

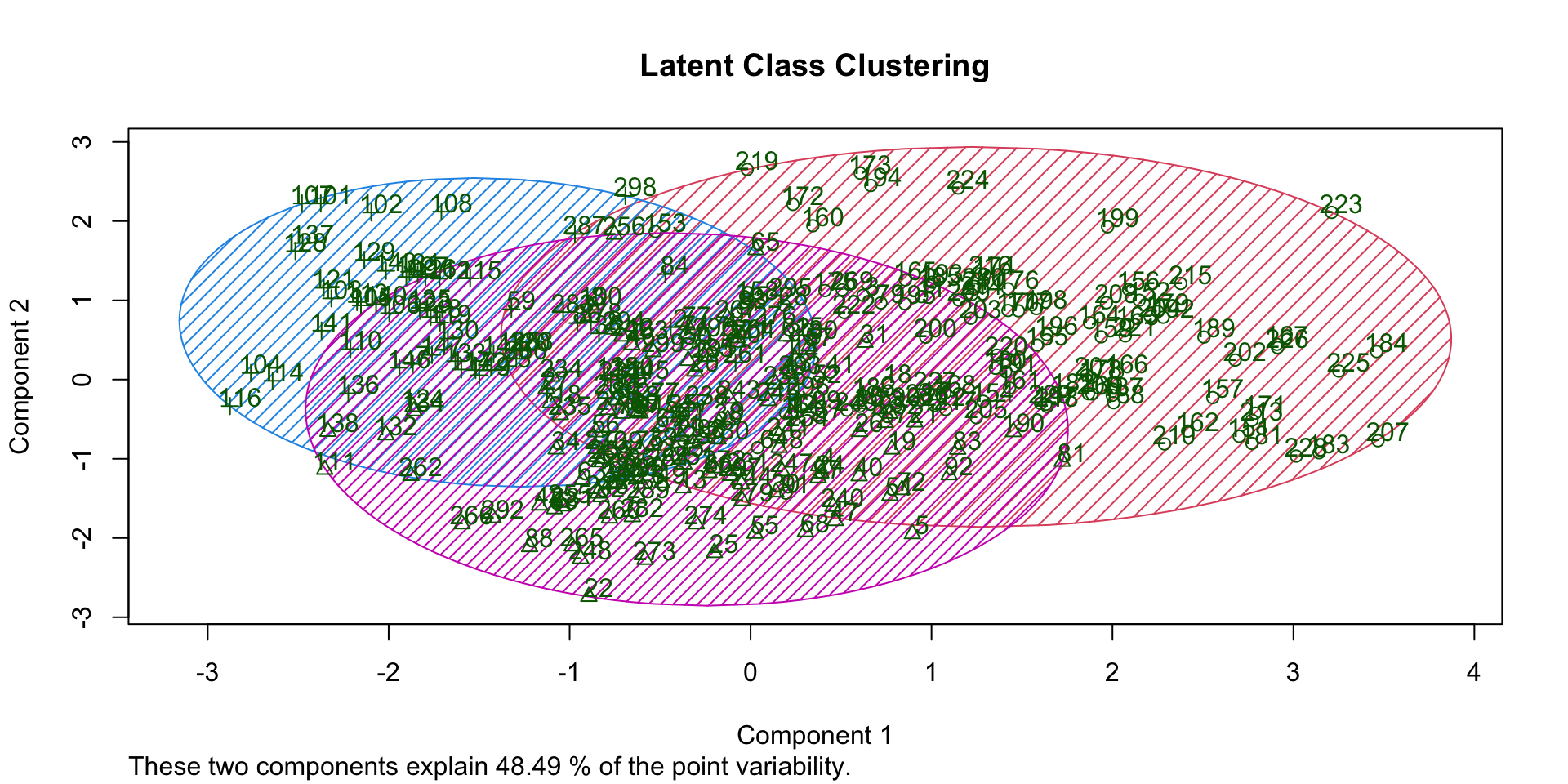

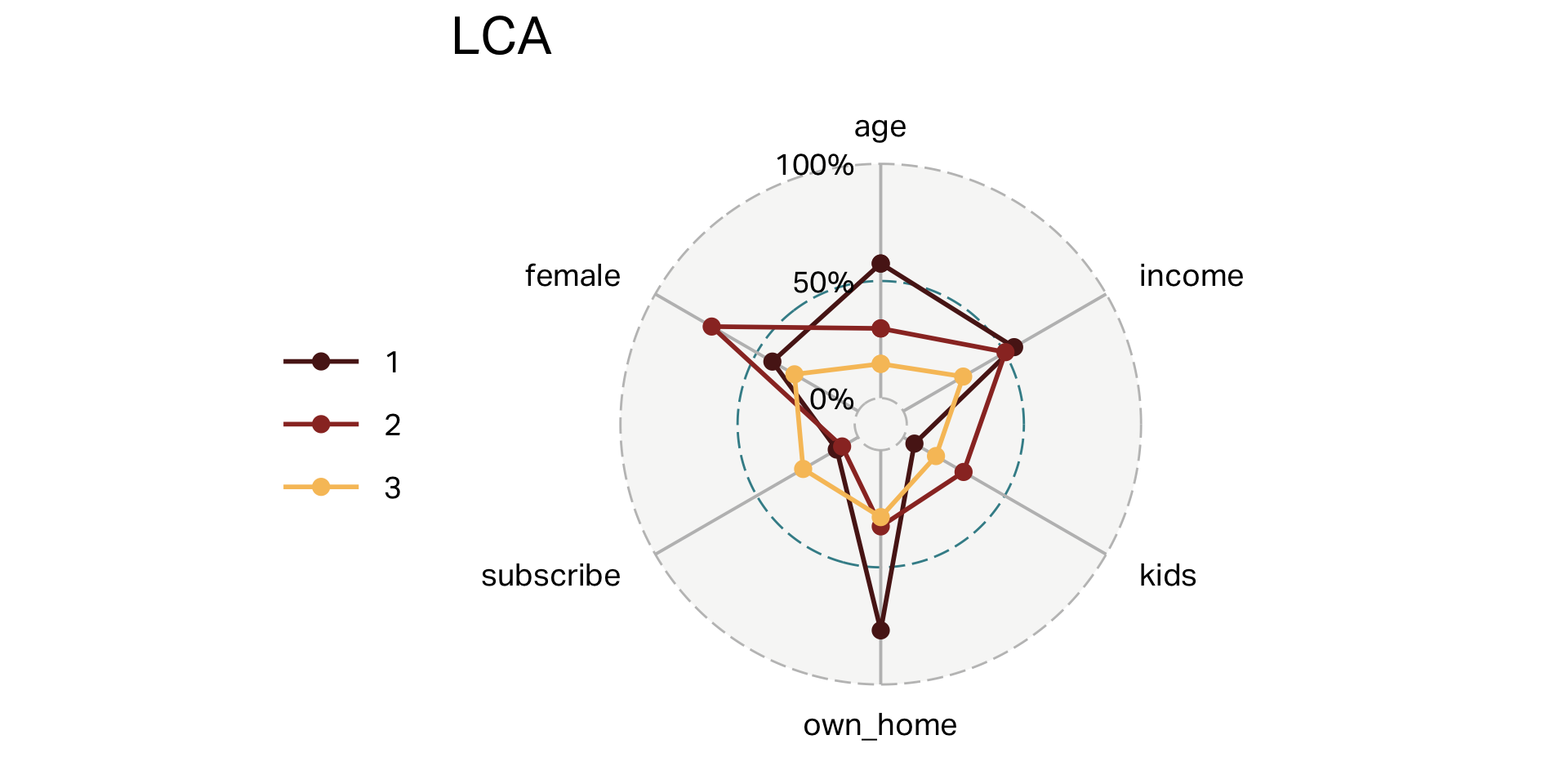

Latent Class Analysis

- Latent Class Analysis (LCA) identifies unobserved (“latent”) segments based on patterns in categorical or binary variables, using probabilistic modeling.

- Best suited for clustering respondents based on survey or categorical data, particularly when dealing with psychological, attitudinal, or preference measures.

- Differentiated from other methods by modeling segment membership probabilistically: each respondent receives a probability of belonging to each segment rather than a single hard assignment.

- Requires larger sample sizes for reliable segment solutions; selecting the number of segments typically involves comparing model-fit statistics (e.g., AIC, BIC).
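Packages such as `poLCA` fit latent class models with multiple random starts and report AIC and BIC. To show the underlying idea, here is a minimal expectation-maximization (EM) sketch in base R for binary (0/1) indicators, assuming items are independent within each class. `lca_em()` is a hypothetical helper, not the method used for these slides, and it uses a single random start.

```r
# Minimal EM for a latent class model with 0/1 indicators (hypothetical helper)
lca_em <- function(Y, K, max_iter = 500, tol = 1e-8, seed = 1) {
  set.seed(seed)
  Y <- as.matrix(Y)
  n <- nrow(Y); J <- ncol(Y)
  prior <- rep(1 / K, K)                           # P(class = k)
  theta <- matrix(runif(K * J, 0.25, 0.75), K, J)  # P(item j = 1 | class k)
  loglik_old <- -Inf
  for (iter in seq_len(max_iter)) {
    # E-step: log P(y_i | class k) under within-class independence
    log_lik <- Y %*% t(log(theta)) + (1 - Y) %*% t(log(1 - theta))
    log_joint <- sweep(log_lik, 2, log(prior), "+")
    m <- apply(log_joint, 1, max)                  # log-sum-exp for stability
    post <- exp(log_joint - m)
    row_tot <- rowSums(post)
    post <- post / row_tot                         # membership probabilities
    loglik <- sum(m + log(row_tot))
    # M-step: update class sizes and item probabilities
    prior <- colMeans(post)
    theta <- (t(post) %*% Y) / colSums(post)
    theta <- pmin(pmax(theta, 1e-6), 1 - 1e-6)     # keep probabilities off 0 and 1
    if (abs(loglik - loglik_old) < tol) break
    loglik_old <- loglik
  }
  n_par <- (K - 1) + K * J
  list(prior = prior, theta = theta, posterior = post,
       class = max.col(post, ties.method = "first"),
       loglik = loglik, bic = -2 * loglik + n_par * log(n))
}

# Hypothetical data: two latent classes answering four yes/no items
set.seed(1)
true <- rep(1:2, each = 150)
p <- rbind(c(0.9, 0.9, 0.1, 0.1), c(0.1, 0.1, 0.9, 0.9))
Y <- t(sapply(true, function(k) rbinom(4, 1, p[k, ])))
fit <- lca_em(Y, K = 2)
round(fit$posterior[1:3, ], 3)  # each row: membership probabilities for one respondent
```

To pick the number of segments, fit several values of `K` and compare `fit$bic`; lower is better.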

Clusters (LCA)

| segment | n | age | income | kids | own_home | subscribe | female |
|---|---|---|---|---|---|---|---|
| 1 | 70 | 25 | 24,872 | 1 | 29% | 27% | 31% |
| 2 | 104 | 55 | 58,215 | 0 | 77% | 11% | 42% |
| 3 | 126 | 37 | 55,613 | 2 | 33% | 8% | 72% |

Overlap (LCA)

Fine-Tuning

The overlaps could stem from the choices I made when transforming the numeric variables into categorical ones. For simplicity, I split each variable at its median, but other cut points might work better.
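One alternative to a median split is to cut each numeric variable at quantiles, for example tertiles (low, middle, high). The sketch below is an assumption, not the approach used in these slides; `cut_quantiles()` is a hypothetical helper. It returns integer codes starting at 1, and it drops duplicate break points, which matters for variables with many ties such as `kids`.

```r
# Cut a numeric variable into quantile-based groups coded 1, 2, 3, ...
cut_quantiles <- function(x, probs = c(0, 1/3, 2/3, 1)) {
  breaks <- unique(quantile(x, probs = probs, names = FALSE, na.rm = TRUE))
  if (length(breaks) < 2) return(rep(1L, length(x)))  # constant variable: one group
  cut(x, breaks = breaks, include.lowest = TRUE, labels = FALSE)
}

cut_quantiles(1:9)  # 1 1 1 2 2 2 3 3 3
```

Wrapping the result in `factor()` gives the same kind of categorical variable as the median-split code, with three levels instead of two.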

```r
# Create a copy of our base dataset
lat_clust_data <- df

# Transform each numeric variable into a two-level factor:
# 1 = below the median, 2 = at or above the median
lat_clust_data$age <- factor(ifelse(lat_clust_data$age < median(lat_clust_data$age), 1, 2))
lat_clust_data$income <- factor(ifelse(lat_clust_data$income < median(lat_clust_data$income), 1, 2))
# The median of kids is 1, so this split separates 0 kids from 1 or more
lat_clust_data$kids <- factor(ifelse(lat_clust_data$kids < median(lat_clust_data$kids), 1, 2))

# The binary variables only need to be converted to factors
lat_clust_data$own_home <- factor(lat_clust_data$own_home)
lat_clust_data$female <- factor(lat_clust_data$female)
lat_clust_data$subscribe <- factor(lat_clust_data$subscribe)
```

Clusters

Your turn


- Huddle up in your groups

- Discuss how you might want to segment the data you are collecting for KCRW, if at all

- Which data would you use for the clustering algorithm?

- Which clustering method would be best? Why?